How robots can tell how clean is ‘clean’

SUTD - Thejus Pathmakumar, Manivannan Kalimuthu, Balakrishnan Ramalingam and Mohan Rajesh Elara

By giving the touch-and-inspect method a smart update, SUTD researchers have designed a sensor for autonomous cleaning robots that can quantify the cleanliness of a given area.

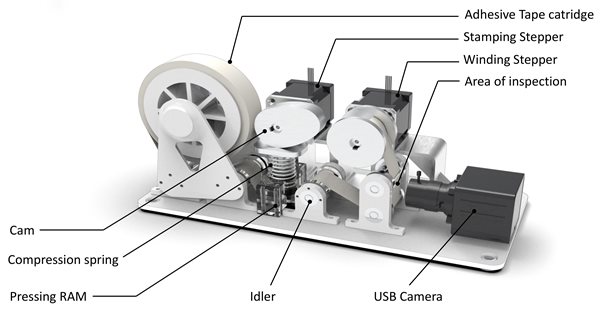

An overview of the sample auditing sensor and its major components.

Over the years, cleaning robots have evolved from disc-shaped vacuum cleaners found in homes to advanced models that can navigate complex spaces like airports and train stations. As COVID-19 highlights the need to keep public spaces clean, how can we be sure that the areas covered by cleaning robots are indeed clean?

One way people determine whether a surface is clean is by touch and visual inspection. While this might work for a dining table or a floor at home, it is not always practical or safe for large public spaces. The method is also hard to standardise, which raises a fundamental question: how clean is ‘clean’?

This ambiguity is what researchers from the Singapore University of Technology and Design (SUTD), supported by the National Robotics Programme, sought to address using autonomous robots that efficiently and systematically determine an area’s cleanliness. Their work, which includes a novel framework that can be used by robots to assess cleanliness and a strategy for exploration, was published in Sensors.

“Currently, there is no standard for estimating how clean is clean,” explained first author Mr Thejus Pathmakumar, PhD student at SUTD. “This research represents our first step towards a robotics solution.”

Taking inspiration from the touch-and-inspect method, the team designed a sensor that presses a white adhesive tape onto the floor and then scans the tape for dirt particles. By measuring the degree of dissimilarity between photos of the tape taken before and after it was pressed, the team came up with a dirt score that can be assigned to the area. The sensor could also count the number of pixels corresponding to dirt in the photo of the tape, providing insight into the area’s dirt density.
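The before-and-after comparison can be illustrated with a minimal sketch. The article does not say which dissimilarity measure the team used, so the mean absolute pixel difference, the `threshold` value and the function name `dirt_metrics` below are all assumptions made for illustration, not the published method.

```python
import numpy as np

def dirt_metrics(before, after, threshold=30):
    """Compare two grayscale tape photos (2-D uint8 arrays of equal shape).

    Hypothetical helper: the metric and the threshold are illustrative
    assumptions, not the sensor's actual processing pipeline.
    """
    # Use signed integers so the subtraction cannot wrap around.
    diff = np.abs(before.astype(np.int16) - after.astype(np.int16))
    # Dissimilarity: mean absolute difference, scaled to the range 0..1.
    dissimilarity = float(diff.mean()) / 255.0
    # Dirt pixels: pixels whose brightness changed by more than the threshold.
    dirt_pixels = int((diff > threshold).sum())
    # Dirt density: fraction of the tape area made up of dirt pixels.
    dirt_density = dirt_pixels / diff.size
    return dissimilarity, dirt_pixels, dirt_density

# Synthetic example: a clean white tape, then the same tape with a dark patch.
clean_tape = np.full((100, 100), 255, dtype=np.uint8)
used_tape = clean_tape.copy()
used_tape[10:20, 10:30] = 55  # 10 x 20 patch of picked-up dirt
score, pixels, density = dirt_metrics(clean_tape, used_tape)
```

In this synthetic case the patch covers 200 of the 10,000 pixels, so the sketch reports a dirt density of 0.02. A real sensor would also need to handle lighting changes and image alignment, which this example ignores.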

“With this sensor that assigns a dirt score to an area using the touch-and-inspect analogy, what we need to do next is design the robot that could ‘touch’ a huge region,” said Pathmakumar.

One strategy is to let the robot roam everywhere, checking every nook and cranny of the area. But Pathmakumar noted that such a method is inefficient, as some regions may have higher concentrations of accumulated dirt, while others may not.

To make exploration smarter, Pathmakumar and colleagues programmed an algorithm that would encourage the robot to explore regions more likely to be dirty. Their dirt-probability-driven algorithm prompted the robot to notice changes in the floor’s visual patterns that may indicate dirt, after which the robot would be directed to navigate into the centre of the region.

To supplement this strategy, the team also used a frontier exploration algorithm so that the robot would prioritise unexplored areas.

“The frontier exploration algorithm is commonly used in applications like search and rescue,” Pathmakumar said. “We modified this algorithm so that while the robot would still be motivated to move towards the frontier, if it sees a region with high dirt probability, it will go there first.”
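The priority rule Pathmakumar describes can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `choose_goal`, the `dirt_threshold` cut-off and the nearest-first tie-breaking are hypothetical and are not taken from the published algorithm.

```python
import math

def choose_goal(robot_xy, frontiers, dirt_regions, dirt_threshold=0.7):
    """Pick the robot's next navigation goal.

    frontiers: list of (x, y) points on the explored/unexplored boundary.
    dirt_regions: list of ((x, y), probability) pairs, where (x, y) is the
        centre of a region and probability is its estimated dirt probability.
    All names and the threshold are illustrative assumptions.
    """
    def distance(point):
        return math.hypot(point[0] - robot_xy[0], point[1] - robot_xy[1])

    # Regions that look dirty enough take priority over frontiers.
    likely_dirty = [centre for centre, prob in dirt_regions
                    if prob >= dirt_threshold]
    if likely_dirty:
        return min(likely_dirty, key=distance)
    # Otherwise fall back to plain frontier exploration: nearest frontier.
    if frontiers:
        return min(frontiers, key=distance)
    # Nothing left to explore or inspect.
    return None

# A dirty-looking region at (4, 4) wins over the closer frontier at (1, 0).
goal = choose_goal((0, 0), [(1, 0), (5, 5)], [((4, 4), 0.9)])
```

With the dirt probability lowered below the threshold, the same call would return the nearest frontier instead, which mirrors the "frontier by default, dirt first" behaviour described in the quote.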

Based on data from the touch-and-inspect method, the robots quantified an area’s cleanliness with a cleaning benchmark score between 0 and 100, with scores closer to 100 corresponding to cleaner surfaces.

The team tested their cleaning audit robots on a mix of indoor and semi-outdoor areas. Their tests showed lower benchmark scores for the latter, as areas with coarse textures made dust particles less susceptible to being lifted by the tape. Transitions between different floor textures also caused the robot to falsely detect dirt, pointing to areas for future improvement.

“In this work, we focused on the visual and tactile aspects that a robot would use to audit cleanliness,” explained SUTD Assistant Professor Mohan Rajesh Elara, principal investigator of the project and co-founder of robotics company Lionsbot. “In the future, we are looking to comprehensively audit the quality of cleaning, taking into account not just the visual and tactile aspects, but also the olfactory aspects and microbial density.”

Assistant Prof Elara, who works closely with the cleaning industry as well as the Singapore National Environment Agency, said that their cleaning audit system can come in a modular format. This way, it can be made into a handheld device used by a human manager or integrated into a robot that audits cleanliness even as it cleans.

“In the field of reconfigurable robotics, SUTD is rated number one in the world by the Web of Science, which benchmarks robotics research globally,” said Assistant Prof Elara. “I see this work as the next frontier in the field of cleaning robotics, and SUTD is leading the way.”

Acknowledgements:

This research is supported by the National Robotics Programme under its Robotics Enabling Capabilities and Technologies (Funding Agency Project No. 1922500051), National Robotics Programme under its Robot Domain Specific (Funding Agency Project No. 1922200058), National Robotics Programme under its Robotics Domain Specific (Funding Agency Project No. 1922200108), and administered by the Agency for Science, Technology and Research.

Reference:

An autonomous Robot-Aided Auditing scheme for floor cleaning. Sensors 21(13), 4332–4351. (DOI: 10.3390/s21134332)